How to Choose the Right USB Camera for Your Edge AI Project: A 2026 Engineer's Guide

Introduction: Your Algorithm is Brilliant. Is Your Vision?

Your Edge AI model is trained, optimized, and ready to deploy. But in the real world, its performance depends entirely on the quality of the data it receives. For any embedded vision project, your camera isn’t just a component—it’s the source of truth. A poor choice here can become the bottleneck for your entire system.

The challenge many engineers face is selecting a camera that balances resolution, autofocus, form factor, and software integration for platforms like NVIDIA Jetson or Raspberry Pi. This blog provides a practical framework: critical decision factors, real-world scenarios, and a product recommendation tailored for Edge AI success.

The New Reality: Why Edge AI Demands a New Class of Camera

The "Why Now" Drivers

The Consequence

This shift has created urgent demand for a new class of vision sensor: compact yet powerful, versatile yet easy to integrate. This is where high-performance embedded USB cameras excel.

The 4 Critical Decision Factors for Your Edge AI Camera

Factor 1: Resolution vs. AI Model Requirements – More Isn’t Always Better

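A 12MP sensor captures immense detail, but it also burdens the edge processor with more pixels to transfer, decode, and resize for every frame. A 2MP sensor, in contrast, gives a much lighter pipeline and a higher achievable frame rate.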

Guideline:

In short: match your camera resolution to your AI inference pipeline.
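As a rough illustration of this trade-off, the sketch below estimates the uncompressed data rate and the downscale ratio for each resolution listed in the comparison table later in this guide. The 30 fps rate, the 2 bytes per pixel (an uncompressed YUY2-style format), and the 640×640 model input are assumptions chosen for the example, not specifications of any particular camera or model.

```python
# Resolutions as listed in the comparison table of this guide.
TABLE_RESOLUTIONS = {
    "2MP": (1920, 1080),
    "5MP": (2592, 1944),
    "8MP": (3264, 2448),
    "12MP": (3840, 2160),
}


def raw_megabytes_per_second(width, height, fps=30, bytes_per_pixel=2):
    """Uncompressed data rate in MB/s (assumed: 2 bytes per pixel, 30 fps)."""
    return width * height * bytes_per_pixel * fps / 1e6


def pixels_per_model_pixel(width, height, model_side=640):
    """Sensor pixels that collapse into each pixel of a square model input.

    model_side=640 is an assumed input size; substitute your model's own.
    """
    return (width * height) / (model_side * model_side)


def summarize(resolutions=TABLE_RESOLUTIONS):
    """Return one (label, MB/s, downscale ratio) row per camera option."""
    return [
        (label, raw_megabytes_per_second(w, h), pixels_per_model_pixel(w, h))
        for label, (w, h) in resolutions.items()
    ]


for label, rate, ratio in summarize():
    print(f"{label:>5}: {rate:8.1f} MB/s raw, {ratio:5.1f} sensor px per model px")
```

A high ratio suggests that most of the captured detail is thrown away when the full frame is resized to the model input. In that case, a lower resolution, or cropping and tiling the region of interest, may serve the pipeline better.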

Factor 2: The Autofocus Imperative – From Blurry Data to Sharp Insights

Fixed-focus cameras are a liability when working with dynamic distances. In robotics, a blurry frame means misidentifying parts; in healthcare, it means missed anomalies.

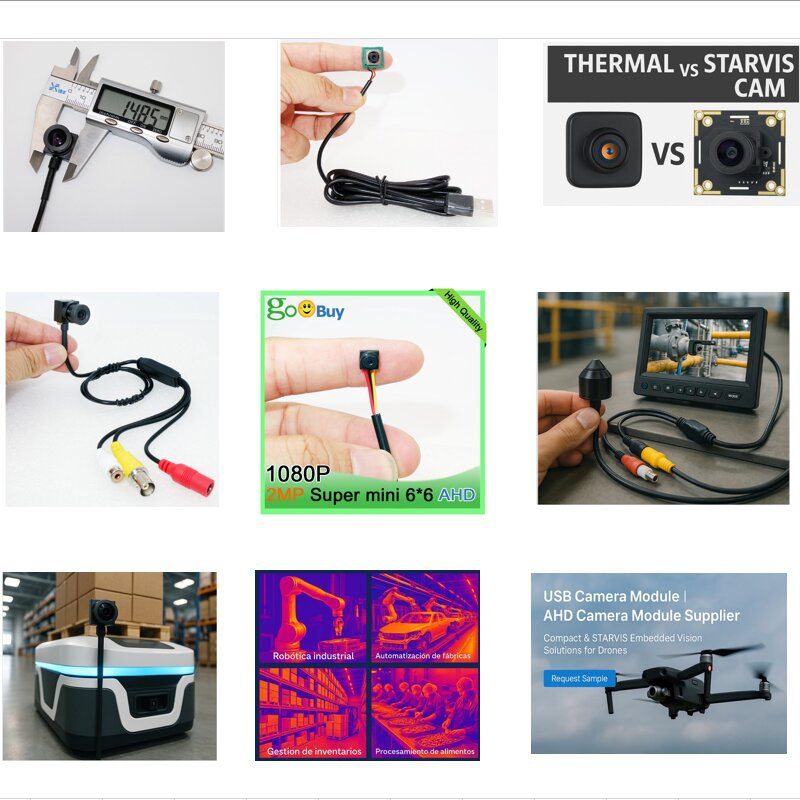

Our 15×15mm micro USB autofocus cameras eliminate this risk, adjusting seamlessly whether the target is 10 cm or 1 m away. For barcode scanning, robotic bin-picking, or medical devices, autofocus ensures your AI receives sharp, usable frames every time.
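Even with autofocus, a pipeline can check frame sharpness before running inference, for example while the lens is still settling. The sketch below uses the variance of a 4-neighbour Laplacian, a common focus measure, written in plain Python so it runs without extra libraries. The function names and the threshold of 100.0 are hypothetical; a real threshold has to be tuned on your own images.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over the interior pixels.

    gray is a list of rows of intensity values (for example 0-255) and
    must be at least 3x3. Sharper images have stronger edges, so the
    variance tends to be higher.
    """
    height, width = len(gray), len(gray[0])
    responses = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
        - 4 * gray[y][x]
        for y in range(1, height - 1)
        for x in range(1, width - 1)
    ]
    return pvariance(responses)


def is_sharp(gray, threshold=100.0):
    """Return True if the focus measure reaches the (assumed) threshold."""
    return laplacian_variance(gray) >= threshold


# Tiny synthetic examples: a flat patch has no edges, a checkerboard has many.
flat = [[128] * 5 for _ in range(5)]
checker = [[255 * ((x + y) % 2) for x in range(5)] for y in range(5)]
print(is_sharp(flat), is_sharp(checker))
```

On real frames the same measure is usually computed with an image library; with OpenCV, `cv2.Laplacian(gray, cv2.CV_64F).var()` is a typical equivalent.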

Factor 3: The Size-to-Performance Ratio – Why Miniaturization is Critical

As embedded systems shrink, space becomes the ultimate constraint. Drone gimbals, robotic end-effectors, and handheld inspection tools cannot accommodate bulky vision systems.

Here lies the advantage of our miniature USB cameras: the 15×15mm form factor packs full resolution, autofocus, and UVC compliance into a package small enough to fit anywhere.

Factor 4: UVC Compliance – The Key to Rapid Prototyping and Deployment

Proprietary SDKs and drivers slow engineers down. A true UVC-compliant USB camera works out of the box on Windows, Linux, macOS, and Android, including Linux-based boards such as NVIDIA Jetson.

This reduces time-to-market and lowers development costs, a decisive advantage for startups and established OEMs alike.
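Because a UVC camera appears as a standard video device, a generic library such as OpenCV can usually open it without a vendor SDK. The sketch below requests a frame size and reports what the driver actually granted, which can differ from the request. The device index 0, the 1920×1080 request, and the helper names `negotiate_resolution` and `demo` are assumptions for illustration.

```python
try:
    import cv2
    PROP_W, PROP_H = cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT
except ImportError:  # lets the helper load on machines without OpenCV
    cv2 = None
    PROP_W, PROP_H = 3, 4  # numeric values of the same OpenCV constants


def negotiate_resolution(cap, width, height):
    """Request a frame size and return the (width, height) actually granted."""
    cap.set(PROP_W, width)
    cap.set(PROP_H, height)
    return int(cap.get(PROP_W)), int(cap.get(PROP_H))


def demo(index=0, width=1920, height=1080):
    """Open a camera, negotiate a size, and grab one frame (needs OpenCV)."""
    if cv2 is None:
        raise RuntimeError("OpenCV (opencv-python) is not installed")
    cap = cv2.VideoCapture(index)  # index 0 is an assumption
    try:
        if not cap.isOpened():
            raise RuntimeError(f"no camera found at index {index}")
        granted = negotiate_resolution(cap, width, height)
        ok, frame = cap.read()
        return granted, (frame.shape if ok else None)
    finally:
        cap.release()
```

Calling `demo()` on a machine with a camera attached returns the granted size and the shape of one captured frame. Comparing the granted size with the request is a quick way to confirm which modes a given module exposes.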

Application Scenarios: Matching Our Modules to Your Mission

Scenario 1: High-Detail Industrial Inspection (USA)

A Michigan electronics company was developing an Automated Optical Inspection (AOI) machine. Their goal: detect microscopic soldering defects on PCBs.

Scenario 2: High-Speed Logistics & Retail (Europe)

A German logistics hub deploying self-checkout kiosks faced barcode scanning issues across varied distances and angles.

Scenario 3: Smart IoT & Presence Detection (Smart Home Sector)

A French smart home OEM needed low-cost sensors for presence detection in home security systems.

Your Solution: The Novel Electronics Micro USB Camera Series

At Shenzhen Novel Electronics Limited, we developed the 15×15mm USB camera series as a unified, scalable platform for Edge AI.

Comparison Table

| Model | Resolution | Best Use Case | Key Advantage |
|---|---|---|---|
| 2MP USB | 1920×1080 | IoT, presence detection | Low power, low cost |
| 5MP USB | 2592×1944 | Logistics, kiosks | Balanced speed + detail |
| 8MP USB | 3264×2448 | Robotics, drones | Wider field + HDR |
| 12MP USB | 3840×2160 | AOI, precision inspection | Extreme detail + autofocus |

This unified architecture means you can scale from entry-level IoT sensors to precision industrial vision without redesigning your mechanical or base software stack.

Conclusion: Your Vision, Accelerated

Choosing the right camera is a strategic decision that directly influences your Edge AI’s success. It’s about striking the perfect balance between resolution, size, autofocus, and integration ease. Our micro USB camera modules are engineered for exactly this balance: compact, versatile, and ready to integrate into your embedded AI systems.

Call to Action

Ready to future-proof your Edge AI device? Get our free camera module comparison chart, or contact our engineers for a tailored consultation.